NatSec Crisis Plan? You guessed it, it’s a secret

For a year, the Government has refused to confirm or deny the existence of its "Continuity of Executive Government" plan, which is to be used in the event of a serious national security event, such as a major terrorist attack or a hostile state using chemical, biological or nuclear weapons against us.

Even though the plan's front cover, which confirms that the plan exists, is public, officially we have continuity of secrecy and deception.

The front cover of the Continuity of Executive Government plan (Source: FOI)

When MWM first requested the plan under Freedom of Information (FOI) laws, Michael Crawford, of the National Preparedness Branch of the Department of the Prime Minister and Cabinet (PM&C), refused to confirm or deny that the plan exists.

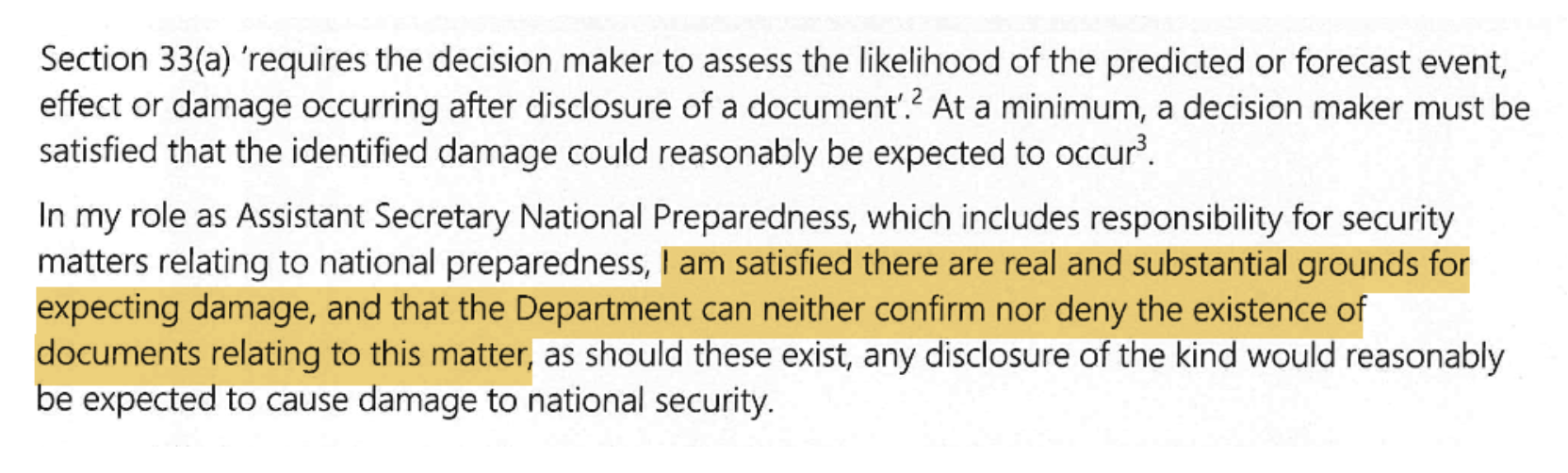

Michael Crawford’s reasons (Source: FOI)

When MWM sought an internal review of the FOI decision, Janet Quigley, first secretary of the Statches and Crisis Management Division at PM&C, upheld the original decision to neither confirm nor deny the plan's existence.

It is beyond belief that knowing a continuity plan exists could do anything other than build confidence that Australians are prepared and better protected.

National security is important. An essential element of any planning is common sense. Unfortunately, we have not seen much of it lately.


Rex Patrick is a former senator for South Australia and a former submariner in the Navy. A crusader against corruption and for transparency, Rex is best known as the "Transparency Warrior".